10 Unsolved Mysteries Of The Brain

From: http://discovermagazine.com/2007/aug/unsolved-brain-mysteries

Jul 31, 2007

What we know—and don’t know—about how we think.

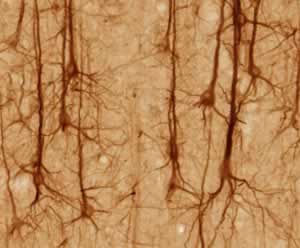

Of all the objects in the universe, the human brain is the most complex: There are as many neurons in the brain as there are stars in the Milky Way galaxy. So it is no surprise that, despite the glow from recent advances in the science of the brain and mind, we still find ourselves squinting in the dark. But we are at least beginning to grasp the crucial mysteries of neuroscience and starting to make headway in addressing them. Even partial answers to these 10 questions could restructure our understanding of the roughly three-pound mass of gray and white matter that defines who we are.

1. How is information coded in neural activity?

Neurons, the specialized cells of the brain, can produce brief spikes of voltage in their outer membranes. These electrical pulses travel along specialized extensions called axons to cause the release of chemical signals elsewhere in the brain. The binary, all-or-nothing spikes appear to carry information about the world: What do I see? Am I hungry? Which way should I turn? But what is the code of these millisecond bits of voltage? Spikes may mean different things at different places and times in the brain. In parts of the central nervous system (the brain and spinal cord), the rate of spiking often correlates with clearly definable external features, like the presence of a color or a face. In the peripheral nervous system, more spikes indicate more heat, a louder sound, or a stronger muscle contraction.
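The simplest version of this rate code (more spikes, more heat) can be illustrated with a toy model. This is a minimal sketch assuming a Poisson-spiking model neuron whose firing rate rises linearly with stimulus intensity; `encode`, `MAX_RATE_HZ`, and the Poisson assumption are illustrative choices, not measured properties of real neurons. A downstream "reader" recovers the intensity just by counting spikes in a time window.

```python
import random

MAX_RATE_HZ = 100.0  # hypothetical firing rate at full stimulus intensity

def encode(intensity):
    """Hypothetical linear rate code: intensity in [0, 1] -> firing rate in Hz."""
    return MAX_RATE_HZ * intensity

def poisson_spike_train(rate_hz, duration_s, dt_s=0.001, seed=0):
    """Spike times (s) of a model Poisson neuron.

    In each bin of width dt_s the neuron fires with probability rate_hz * dt_s,
    a standard approximation that holds while that product is well below 1.
    """
    rng = random.Random(seed)
    n_bins = round(duration_s / dt_s)
    return [i * dt_s for i in range(n_bins) if rng.random() < rate_hz * dt_s]

def decode_rate(spike_times, duration_s):
    """Rate decoder: count the spikes and divide by the counting window."""
    return len(spike_times) / duration_s

for intensity in (0.1, 0.5, 0.9):
    spikes = poisson_spike_train(encode(intensity), duration_s=10.0)
    print(f"intensity {intensity}: decoded {decode_rate(spikes, 10.0):.1f} Hz")
```

Running it prints decoded rates close to 10, 50, and 90 Hz. Reusing one seed keeps the comparison fair: every train sees the same random draws, so a stronger stimulus can only add spikes, never remove them.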

As we delve deeper into the brain, however, we find populations of neurons involved in more complex phenomena, like reminiscence, value judgments, simulation of possible futures, the desire for a mate, and so on—and here the signals become difficult to decrypt. The challenge is something like popping the cover off a computer, measuring a few transistors chattering between high and low voltage, and trying to guess the content of the Web page being surfed.

It is likely that mental information is stored not in single cells but in populations of cells and patterns of their activity. However, it is currently not clear how to determine which neurons belong to a particular group; worse still, current technologies, like sticking fine electrodes directly into the brain (http://discovermagazine.com/topics/mind-brain/machine-brain-connections), are not well suited to measuring several thousand neurons at once. Nor is it simple to monitor the connections of even one neuron: A typical neuron in the cortex receives input from some 10,000 other neurons.

Although traveling bursts of voltage can carry signals across the brain quickly, those electrical spikes may not be the only (or even the main) way that information is carried in nervous systems. Forward-looking studies are examining other possible information couriers: glial cells (http://www.sfn.org/index.cfm?pagename=brainBriefings_astrocytes) (poorly understood brain cells often estimated to be 10 times as common as neurons), other kinds of signaling mechanisms between cells (such as newly discovered gases and peptides), and the biochemical cascades that take place inside cells.

2. How are memories stored and retrieved?

When you learn a new fact, like someone’s name, there are physical changes in the structure of your brain. But we don’t yet comprehend exactly what those changes are, how they are orchestrated across vast seas of synapses and neurons, how they embody knowledge, or how they are read out decades later for retrieval.

One complication is that there are many kinds of memories. The brain seems to distinguish short-term memory (remembering a phone number just long enough to dial it) from long-term memory (what you did on your last birthday). Within long-term memory, declarative memories (like names and facts) are distinct from nondeclarative memories (riding a bicycle, being affected by a subliminal message), and within these general categories are numerous subtypes. Different brain structures seem to support different kinds of learning and memory; brain damage can lead to the loss of one type without disturbing the others.

Nonetheless, similar molecular mechanisms may be at work in these memory types. Almost all theories of memory propose that memory storage depends on synapses, the tiny connections between brain cells. When two cells are active at the same time, the connection between them strengthens (http://discovermagazine.com/2007/brain/cogitator); when they are not active at the same time, the connection weakens. Out of such synaptic changes emerges an association. Experience can, for example, fortify the connections between the smell of coffee, its taste, its color, and the feel of its warmth. Since the populations of neurons connected with each of these sensations are typically activated at the same time, the connections between them can cause all the sensory associations of coffee to be triggered by the smell alone.
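The coincidence rule described above (cells active together strengthen their connection; cells active apart weaken it) can be sketched as a toy associative network that completes the coffee memory from the smell alone. Everything here is an illustrative assumption: the five binary "feature" units, the learning rate, the decay, and the recall threshold are made up, and real synaptic plasticity is far richer than a single weight update.

```python
# Hypothetical feature units; 1 = the neurons for that feature are active.
FEATURES = ["smell", "taste", "color", "warmth", "phone_ring"]

def hebbian_train(patterns, n, lr=0.1, decay=0.05, epochs=20):
    """Toy coincidence rule: strengthen w[i][j] when units i and j are active
    together; weaken it (down to zero) when only one of them is active."""
    w = [[0.0] * n for _ in range(n)]
    for _ in range(epochs):
        for p in patterns:
            for i in range(n):
                for j in range(n):
                    if i == j:
                        continue
                    if p[i] and p[j]:
                        w[i][j] += lr
                    elif p[i] or p[j]:
                        w[i][j] = max(0.0, w[i][j] - decay)
    return w

def recall(w, cue, threshold=0.5, steps=5):
    """Pattern completion: a silent unit switches on when its summed input
    from active units exceeds the threshold; cued units stay on."""
    state = list(cue)
    n = len(state)
    for _ in range(steps):
        new_state = [
            1 if state[i] or sum(w[j][i] * state[j] for j in range(n)) > threshold else 0
            for i in range(n)
        ]
        if new_state == state:  # stable: nothing more to complete
            break
        state = new_state
    return state

coffee = [1, 1, 1, 1, 0]  # smell, taste, color and warmth experienced together
phone = [0, 0, 0, 0, 1]   # an unrelated experience
w = hebbian_train([coffee, phone], n=len(FEATURES))
completed = recall(w, [1, 0, 0, 0, 0])  # cue: the smell alone
print([f for f, on in zip(FEATURES, completed) if on])
# prints ['smell', 'taste', 'color', 'warmth']
```

The unrelated "phone" unit never fires alongside the coffee features, so its connections to them decay to zero and the smell cue does not drag it into the recalled memory.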

But looking only at associations, and at strengthened connections between neurons, may not be enough to explain memory. The great secret of memory is that it encodes the relationships between things more than the details of the things themselves. When you memorize a melody, you encode the relationships between the notes, not the notes per se, which is why you can easily sing the song in a different key.
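A small illustration of what storing "relationships, not the notes per se" buys you: encode a melody as the steps between successive notes. This sketch uses MIDI note numbers (60 is middle C, one unit per semitone) purely as a convenient numbering; it is not a claim about how neurons represent pitch, and the helper names are hypothetical.

```python
def intervals(notes):
    """Encode a melody by the semitone steps between successive notes."""
    return [b - a for a, b in zip(notes, notes[1:])]

def transpose(notes, semitones):
    """Shift every note by the same amount (change key)."""
    return [n + semitones for n in notes]

def play_from(start, steps):
    """Rebuild a melody from any starting note plus the stored intervals."""
    notes = [start]
    for s in steps:
        notes.append(notes[-1] + s)
    return notes

twinkle = [60, 60, 67, 67, 69, 69, 67]  # opening of "Twinkle, Twinkle, Little Star" in C
in_d = transpose(twinkle, 2)            # the same tune, two semitones higher

print(twinkle == in_d)                            # False: every absolute note differs
print(intervals(twinkle) == intervals(in_d))      # True: the relationships are identical
print(play_from(62, intervals(twinkle)) == in_d)  # True: intervals plus a new start note
```

Storing only the interval list is what makes singing in a new key effortless: pick any starting note and replay the same steps.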

Memory retrieval is even more mysterious than storage. When I ask whether you know Alex Ritchie, you know the answer immediately, and there is no good theory to explain how retrieval can happen so quickly. Moreover, the act of retrieval can destabilize the memory. When you recall a past event, the memory becomes temporarily susceptible to erasure. Some intriguing recent experiments show it is possible to chemically block memories from reforming during that window, raising new ethical questions that require careful consideration.

3. What does the baseline activity in the brain represent?

Neuroscientists have mostly studied changes in brain activity that correlate with stimuli we can present in the laboratory, such as a picture, a touch, or a sound. But the activity of the brain at rest (http://www.pnas.org/cgi/content/abstract/98/2/676)—its “baseline” activity—may prove to be the most important aspect of our mental lives. The awake, resting brain uses 20 percent of the body’s total oxygen, even though it makes up only 2 percent of the body’s mass. Some of the baseline activity (http://www.sciencemag.org/cgi/content/abstract/315/5810/393) may represent the brain restructuring knowledge in the background, simulating future states and events, or manipulating memories. Most things we care about—reminiscences, emotions, drives, plans, and so on—can occur with no external stimulus and no overt output that can be measured.

One clue about baseline activity comes from neuroimaging experiments (http://www.sciam.com/article.cfm?chanID=sa003&articleID=375DF6ED-E7F2-99DF-35A4B6CADBFC701D&ref=rss), which show that activity decreases in some brain areas just before a person performs a goal-directed task. The areas that decrease are the same regardless of the details of the task, hinting that these areas may run baseline programs during downtime, much as your computer might run a disk-defragmenting program only while the resources are not needed elsewhere.

In the traditional view of perception, information from the outside world pours into the senses, works its way through the brain, and makes itself consciously seen, heard, and felt. But many scientists are coming to think that sensory input may merely revise ongoing internal activity in the brain. Note, for example, that sensory input is not required for perception: When your eyes are closed during dreaming, you still enjoy rich visual experience. The awake state may be essentially the same as the dreaming state, only partially anchored by external stimuli. In this view, your conscious life is an awake dream.

4. How do brains simulate the future?

When a fire chief encounters a new blaze, he quickly makes predictions about how to best position his men. Running such simulations of the future—without the risk and expense of actually attempting them—allows “our hypotheses to die in our stead,” as philosopher Karl Popper put it. For this reason, the emulation of possible futures is one of the key businesses that intelligent brains invest in.

Yet we know little about how the brain’s future simulator works because traditional neuroscience technologies are best suited for correlating brain activity with explicit behaviors, not mental emulations. One idea suggests that the brain’s resources are devoted not only to processing stimuli and reacting to them (watching a ball come at you) but also to constructing an internal model of that outside world and extracting rules for how things tend to behave (knowing how balls move through the air). Internal models may play a role not only in motor acts, like catching, but also in perception. For example, vision draws on significant amounts of information in the brain, not just on input from the retina. Many neuroscientists have suggested over the past few decades that perception arises not simply by building up bits of data through a hierarchy but rather by matching incoming sensory data against internally generated expectations.

But how does a system learn to make good predictions about the world? It may be that memory exists only for this purpose. This is not a new idea: Two millennia ago, Aristotle and Galen emphasized memory as a tool (http://classics.mit.edu/Aristotle/memory.html) in making successful predictions for the future. Even your memories about your life may come to be understood as a special subtype of emulation, one that is pinned down and thus likely to flow in a certain direction.

5. What are emotions?

We often talk about brains as information-processing systems, but any account of the brain that lacks an account of emotions, motivations, fears, and hopes is incomplete. Emotions are measurable physical responses to salient stimuli: the increased heartbeat and perspiration that accompany fear, the freezing response of a rat (http://discovermagazine.com/2007/feb/toxoplasma-gondii-culture-sex-ratio) in the presence of a cat, or the extra muscle tension that accompanies anger. Feelings, on the other hand, are the subjective experiences that sometimes accompany these processes: the sensations of happiness, envy, sadness, and so on. Emotions seem to employ largely unconscious machinery—for example, brain areas involved in emotion will respond to angry faces that are briefly presented and then rapidly masked, even when subjects are unaware of having seen the face. Across cultures the expression of basic emotions is remarkably similar, and as Darwin observed, it is also similar across all mammals. There are even strong similarities in physiological responses among humans, reptiles, and birds when showing fear, anger, or parental love.

Modern views propose that emotions are brain states that quickly assign value to outcomes and provide a simple plan of action. Thus, emotion can be viewed as a type of computation, a rapid, automatic summary that initiates appropriate actions. When a bear is galloping toward you, the rising fear directs your brain to do the right things (determining an escape route) instead of all the other things it could be doing (rounding out your grocery list). When it comes to perception, you can spot an object more quickly if it is, say, a spider rather than a roll of tape. In the realm of memory, emotional events are laid down differently by a parallel memory system involving a brain area called the amygdala.

One goal of emotional neuroscience is to understand the nature of the many disorders of emotion, depression being the most common and costly. Impulsive aggression and violence are also thought to be consequences of faulty emotion regulation.

6. What is intelligence?

Intelligence comes in many forms, but it is not known what intelligence—in any of its guises—means biologically. How do billions of neurons work together to manipulate knowledge, simulate novel situations, and erase inconsequential information? What happens when two concepts “fit” together and you suddenly see a solution to a problem? What happens in your brain when it suddenly dawns on you that the killer in the movie is actually the unsuspected wife? Do intelligent people store knowledge in a way that is more distilled, more varied, or more easily retrievable?

We all grew up with the near-future promise of smart robots (http://discovermagazine.com/topics/technology/robots), but today we have little better than the Roomba robotic vacuum cleaner. What went wrong? (http://discovermagazine.com/2007/feb/jetpack-future-technologies) There are two camps for explaining the weak performance of artificial intelligence: Either we do not know enough of the fundamental principles of brain function, or we have not simulated enough neurons working together. If the latter is true, that’s good news: Computation gets cheaper and faster each year, so we should not be far from enjoying life with Asimovian robots that can effectively tend our households. Yet most neuroscientists recognize how distant we are from that dream. Currently, our robots are little more intelligent than sea slugs, and even after decades of clever research, they can barely match an infant’s skill at distinguishing figures from a background.

Recent experiments explore the possible relationship of intelligence to the capacity of short-term memory, the ability to quickly resolve cognitive conflict, or the ability to store stronger associations between facts; the results are not yet conclusive. Many other possibilities—better restructuring of stored information, more parallel processing, or superior emulation of possible futures—have not yet been probed by experiments.

Intelligence may not be underpinned by a single mechanism or a single neural area. Whatever intelligence is, it lies at the heart of what is special about Homo sapiens. Other species are hardwired to solve particular problems, while our ability to abstract allows us to solve an open-ended series of problems. This means that studies of intelligence in mice and monkeys may be barking up the wrong family tree.

7. How is time represented in the brain?

Hundred-yard dashes begin with a gunshot rather than a strobe light because your brain can react more quickly to a bang than to a flash. Yet as soon as we get outside the realm of motor reactions and into the realm of perception (what you report that you saw and heard), the story changes. When it comes to awareness, the brain goes to a good deal of trouble to synchronize incoming signals that are processed at very different speeds.

For example, snap your fingers in front of you. Although your auditory system processes information about the snap about 30 milliseconds faster than your visual system, the sight of your fingers and the sound of the snap seem simultaneous. Your brain is employing fancy editing tricks to make simultaneous events in the world feel simultaneous to you, even when the different senses processing the information would individually swear otherwise.

For a simple example of how your brain plays tricks with time, look in the mirror at your left eye. Now shift your gaze to your right eye. Your eye movements take time, of course, but you do not see your eyes move. It is as if the world instantly made the transition from one view to the next. What happened to that little gap in time? For that matter, what happens to the 80 milliseconds of darkness you should see every time you blink your eyes? Bottom line: Your notion of the smooth passage of time is a construction of the brain. Clarifying the picture of how the brain normally solves timing problems should give insight into what happens when temporal calibration goes wrong, as may happen in the brains of people with dyslexia. Sensory inputs that are out of sync also contribute to the risk of falls in elderly patients.

8. Why do brains sleep and dream?

One of the most astonishing aspects of our lives is that we spend a third of our time in the strange world of sleep. Newborn babies spend about twice that. It is inordinately difficult to remain awake for more than a full day-night cycle. In humans, continuous wakefulness of the nervous system results in mental derangement; rats deprived of sleep for 10 days die (http://www.ncbi.nlm.nih.gov/sites/entrez?cmd=Retrieve&db=PubMed&list_uids=2928622&dopt=Citation). All mammals sleep, reptiles and birds sleep, and voluntary breathers like dolphins sleep (http://link.brightcove.com/services/link/bcpid370512060/bclid533256427/bctid741862022) with one brain hemisphere dormant at a time. The evolutionary trend is clear, but the function of sleep is not.

The universality of sleep, even though it comes at the cost of time and leaves the sleeper relatively defenseless, suggests a deep importance. There is no universally agreed-upon answer, but there are at least three popular (and nonexclusive) guesses. The first is that sleep is restorative, saving and replenishing the body’s energy stores. However, the high neural activity during sleep suggests there is more to the story. A second theory proposes that sleep allows the brain to run simulations of fighting, problem solving, and other key actions before testing them out in the real world. A third theory—the one that enjoys the most evidence—is that sleep plays a critical role in learning and consolidating memories and in forgetting inconsequential details. In other words, sleep allows the brain to store away the important stuff and take out the neural trash.

Recently, the spotlight has focused on REM sleep as the most important phase for locking memories into long-term encoding. In one study, rats were trained to scurry around a track for a food reward. The researchers recorded activity in the neurons known as place cells, which showed distinct patterns of activity depending upon the rats’ location on the track. Later, while the rats dropped off into REM sleep, the recordings continued. During this sleep, the rats’ place cells often repeated the exact same pattern of activity that was seen when the animals ran. The correlation was so close, the researchers claimed, that as the animal “dreamed,” they could reconstruct where it would be on the track if it had been awake—and whether the animal was dreaming of running or standing still. The emerging idea is that information replayed during sleep might determine which events we remember later. Sleep, in this view, is akin to an off-line practice session. In several recent experiments, human subjects performing difficult tasks improved their scores between sessions on consecutive days, but not between sessions on the same day, implicating sleep in the learning process.
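The article does not say how the researchers matched sleep activity to track locations. One simple approach that illustrates the idea is template matching: record the population pattern of place cells at each location while the animal is awake, then label a sleep pattern with whichever awake template it correlates with best. Everything below is an assumption for illustration (five cells, Gaussian place fields, the noise values), and the actual study may have used a different decoding method.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length lists (0.0 if either is flat)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def place_field(center, position, width=1.0, peak_hz=20.0):
    """Hypothetical Gaussian tuning curve: a place cell's rate at a position."""
    return peak_hz * math.exp(-((position - center) ** 2) / (2 * width ** 2))

POSITIONS = range(10)           # hypothetical: 10 locations along the track
CELL_CENTERS = [0, 2, 4, 6, 8]  # hypothetical: preferred locations of 5 place cells

# Awake templates: the population firing pattern recorded at each location.
templates = {p: [place_field(c, p) for c in CELL_CENTERS] for p in POSITIONS}

def decode_position(pattern):
    """Return the location whose awake template best correlates with pattern."""
    return max(templates, key=lambda p: pearson(templates[p], pattern))

# A made-up sleep pattern: the awake pattern at location 6 plus some noise.
noise = [0.5, -0.3, 0.2, -0.4, 0.1]
sleep_pattern = [r + n for r, n in zip(templates[6], noise)]
print(decode_position(sleep_pattern))  # prints 6
```

Correlation is used rather than raw distance so that a replay that is uniformly weaker or stronger than the awake activity still matches the right location.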

Understanding how sleeping and dreaming are changed by trauma, drugs, and disease—and how we might modulate our need for sleep—is a rich field to harvest for future clues.

9. How do the specialized systems of the brain integrate with one another?

To the naked eye, no part of the brain’s surface looks terribly different from any other part. But when we measure activity, we find that different types of information lurk in each region of the neural territory. Within vision, for example, separate areas process motion, edges, faces, and colors. The territory of the adult brain is as fractured as a map of the countries of the world.

Now that neuroscientists have a reasonable idea of how that territory is divided, we find ourselves looking at a strange assortment of brain networks involved with smell, hunger, pain, goal setting, temperature, prediction, and hundreds of other tasks. Despite their disparate functions, these systems seem to work together seamlessly. There are almost no good ideas about how this occurs.

Nor is it understood how the brain coordinates its systems so rapidly. The slow speed of spikes (they travel about one foot per second in axons that lack the insulating sheathing called myelin) is one hundred-millionth the speed of signal transmission in digital computers. Yet a human can recognize a friend almost instantaneously, while digital computers are slow—and usually unsuccessful—at face recognition. How can an organ with such slow parts operate so quickly? The usual answer is that the brain is a parallel processor, running many operations at the same time. This is almost certainly true, but what slows down parallel-processing digital computers is the next stage of operations, where results need to be compared and decided upon. Brains are amazingly fast at this. So while the brain’s ability to do parallel processing is impressive, its ability to rapidly synthesize those parallel processes into a single, behavior-guiding output is at least as significant. An animal running must go left or right around a tree; it cannot do both.

There is no special anatomical location in the brain where information from all the different systems converges; rather, the specialized areas all interconnect with one another, forming a network of parallel and recurring links. Somehow, our integrated image of the world emerges from this complex labyrinthine network of brain structures. Surprisingly little study has been done on large, loopy networks like the ones in the brain, probably in part because it is easier to think about brains as tidy assembly lines than as dynamic networks.

10. What is consciousness?

Think back to your first kiss. The experience of it may pop into your head instantly. Where was that memory before you became conscious of it? How was it stored in your brain before and after it came into consciousness? What is the difference between those states?

An explanation of consciousness is one of the major unsolved problems of modern science. It may not turn out to be a single phenomenon; nonetheless, by way of a preliminary target, let’s think of it as the thing that flickers on when you wake up in the morning that was not there, in the exact same brain hardware, moments before.

Neuroscientists believe that consciousness emerges from the material stuff of the brain primarily because even very small changes to your brain (say, by drugs or disease) can powerfully alter your subjective experiences. The heart of the problem is that we do not yet know how to engineer pieces and parts such that the resulting machine has the kind of private subjective experience that you and I take for granted. If I give you all the Tinkertoys in the world and tell you to hook them up so that they form a conscious machine, good luck. We don’t have a theory yet of how to do this; we don’t even know what the theory will look like.

One of the traditional challenges to consciousness research is studying it experimentally. It is probable that at any moment some active neuronal processes correlate with consciousness, while others do not. The first challenge is to determine the difference between them. Some clever experiments are making at least a little headway. In one of these, subjects see an image of a house in one eye and, simultaneously, an image of a cow in the other. Instead of perceiving a house-cow mixture, people perceive only one of them (http://www.nature.com/nature/journal/v380/n6575/abs/380621a0.html). Then, after some random amount of time, they will believe they’re seeing the other, and they will continue to switch slowly back and forth. Yet nothing about the visual stimulus changes; only the conscious experience changes. This test allows investigators to probe which properties of neuronal activity correlate with the changes in subjective experience.

The mechanisms underlying consciousness could reside at any of a variety of physical levels: molecular, cellular, circuit, pathway, or some organizational level not yet described. The mechanisms might also be a product of interactions between these levels. One compelling but still speculative notion is that the massive feedback circuitry of the brain is essential to the production of consciousness.

In the near term, scientists are working to identify the areas of the brain that correlate with consciousness. Then comes the next step: understanding why they correlate. This is the so-called hard problem of neuroscience, and it lies at the outer limit of what material explanations will say about the experience of being human.